How to Build a CDP, DMP, and Data Lake for AdTech and MarTech

Data platforms have been a foundational part of the programmatic advertising and digital marketing industries for well over a decade.

Platforms like customer data platforms (CDPs) and data management platforms (DMPs) are essential for helping advertisers and publishers run targeted advertising campaigns, generate detailed analytics reports, perform attribution, and develop a deeper understanding of their audiences.

A third component — the data lake — acts as a centralized repository that stores all structured and unstructured data in one place. Data collected in the lake can then be passed to a CDP or DMP and used to build audiences, among other purposes.

This guide covers what CDPs, DMPs, and data lakes are, outlines scenarios where building your own makes sense, and walks through the key technical requirements and challenges involved in building each one.

When Does Building a CDP or DMP Make Sense?

Although there is no shortage of CDPs and DMPs on the market, many organizations need a proprietary solution to keep control over their collected data, intellectual property, and feature roadmap.

Building a CDP or DMP is worth considering in situations like these:

- An AdTech or MarTech company looking to expand or improve its core technology offering.

- A publisher wanting to build a walled garden to monetize first-party data and enable advertisers to target its audiences.

- A company that collects large volumes of data from multiple sources and wants full ownership of the technology and control over the product roadmap.

What Is a Customer Data Platform (CDP)?

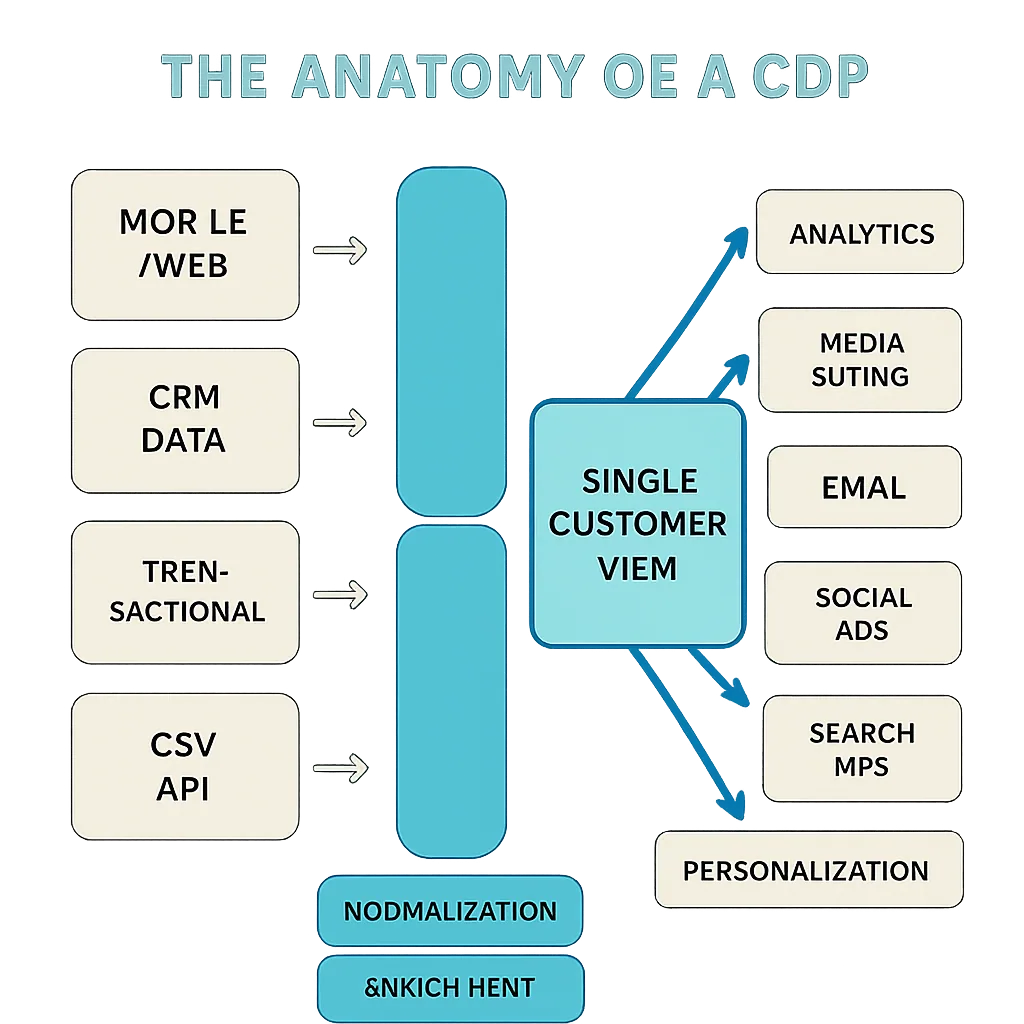

A customer data platform (CDP) is a category of marketing technology that collects and organizes data from a range of online and offline sources.

CDPs are typically used by marketers to gather all available data about a customer and aggregate it into a single database, which is integrated with and accessible from other marketing systems and platforms used across the organization.

With a CDP, marketers can view detailed analytics reports, create user profiles, audiences, and segments, build single customer views, and improve advertising and marketing campaigns by exporting data to other systems.

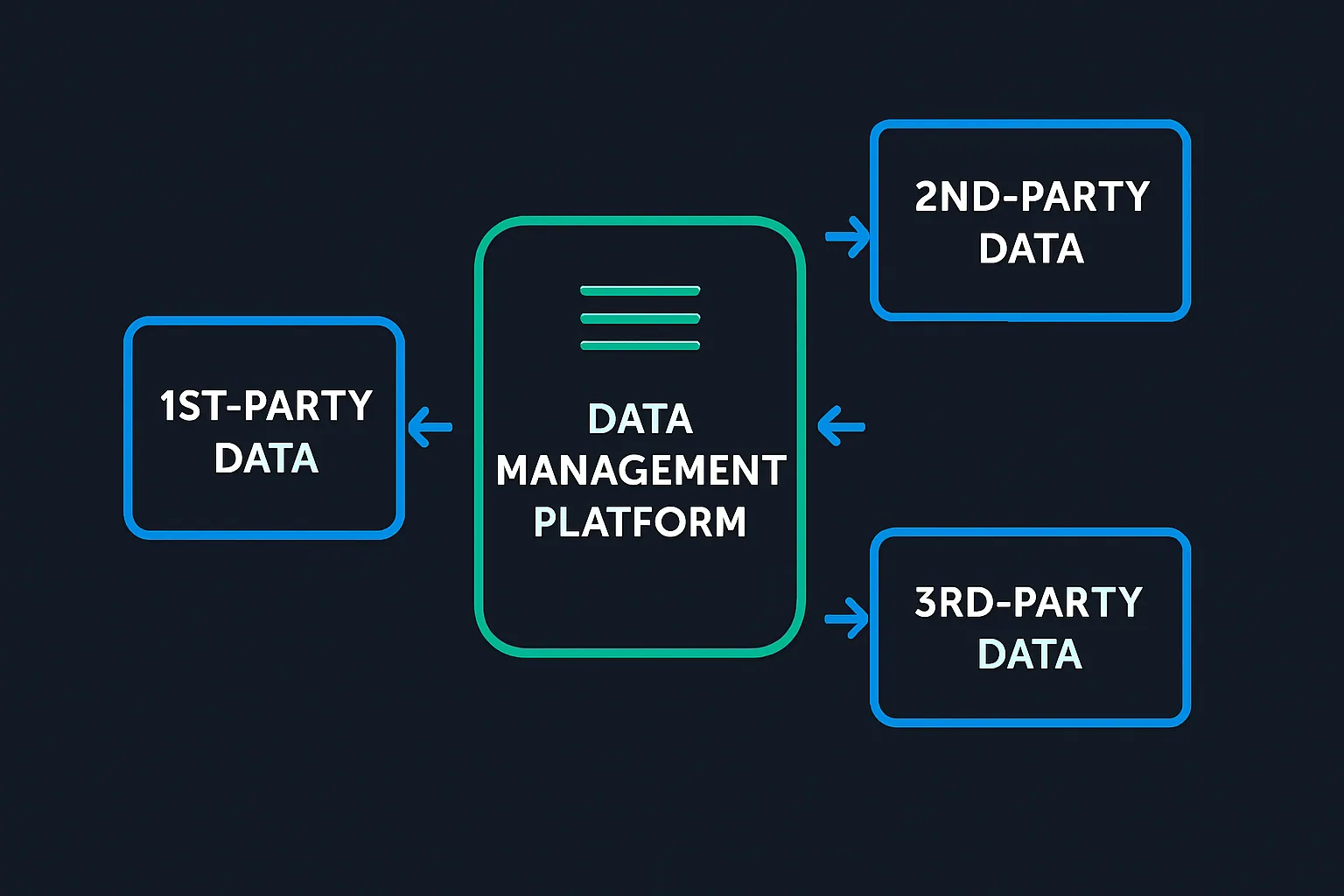

What Is a Data Management Platform (DMP)?

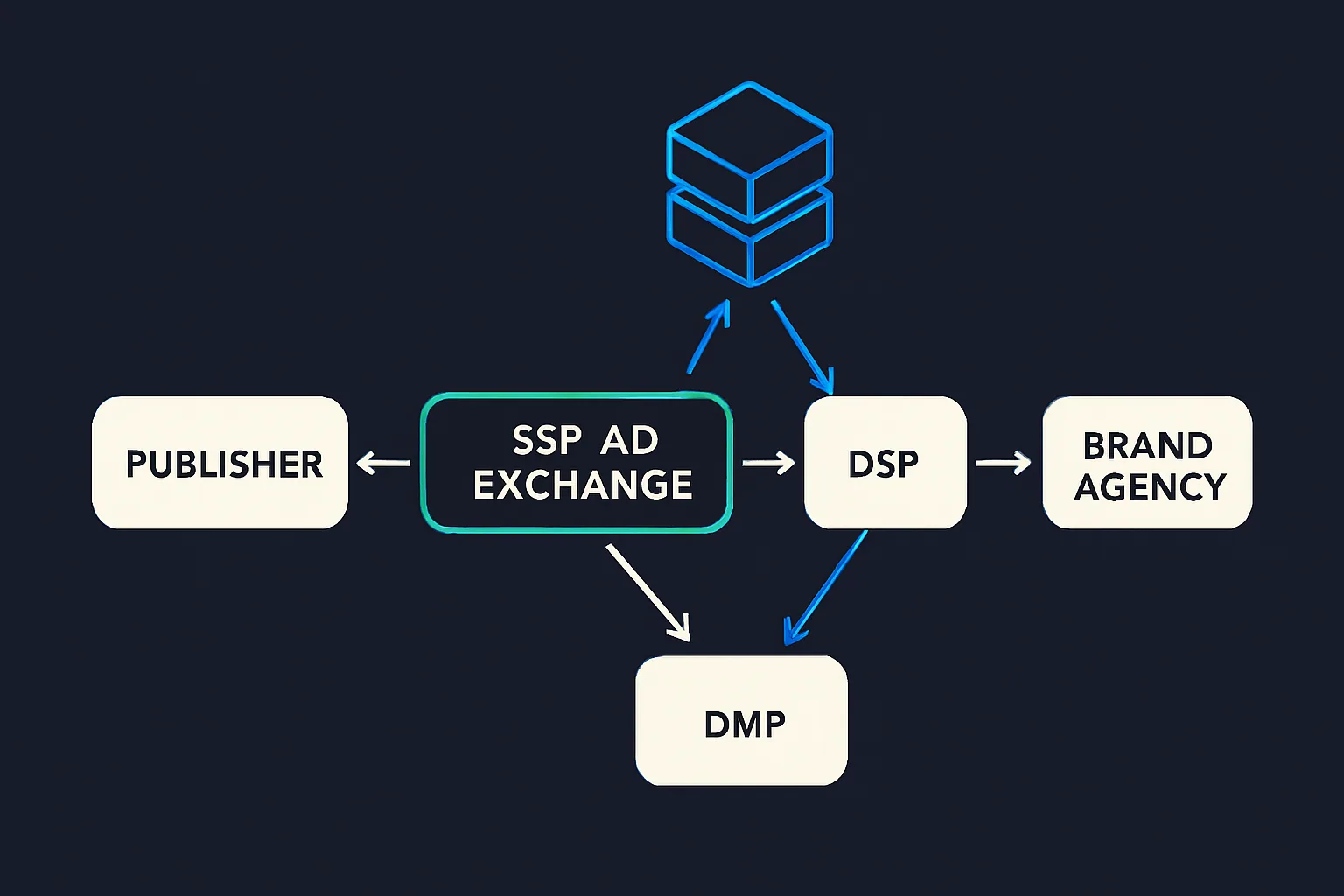

A data management platform (DMP) is software that collects, stores, and organizes data from a range of sources, including websites, mobile apps, and advertising campaigns. Advertisers, agencies, and publishers use DMPs to improve ad targeting, conduct advanced analytics, perform lookalike modelling, and extend audiences.

What Is a Data Lake?

A data lake is a centralized repository that stores structured, semi-structured, and unstructured data, usually in large amounts. Data lakes are often used as a single source of truth — meaning data is prepared and stored in a way that ensures it is correct and validated. A data lake also serves as a universal source of normalized, deduplicated, and aggregated data used across an entire organization, and typically includes user-access controls.

- Structured data: Data that has been formatted using a schema. Structured data is easily searchable in relational databases.

- Semi-structured data: Data that doesn't conform to the tabular structure of databases, but contains organizational properties that allow it to be analyzed.

- Unstructured data: Data that hasn't been formatted and remains in its original state.

| Structured data | Semi-structured or flat data | Unstructured and binary data |
|---|---|---|
| Databases | Logs, CSV, XML, and JSON data | Emails, documents, PDFs, web pages, natural language, audio, video, and image data |

Many companies have data science departments or products (like a CDP) that collect data from different sources but require a common, shared source of data. Data collected from these different sources often needs additional processing before it can be used for programmatic advertising or data analysis.

Unaltered or raw-stage data — also referred to as bronze data — is typically preserved throughout this process. Keeping raw data available enables additional verification steps on sampled or full data sets, and also serves as a fallback for reprocessing historical data that wasn't fully transformed during a prior pipeline run.

What's the Difference Between a CDP, DMP, and a Data Lake?

CDPs and DMPs may appear similar on the surface, since both collect and store data about customers. There are important differences in how they operate, however.

CDPs primarily use first-party data and are built around real consumer identities, relying on personally identifiable information (PII). The data originates from various internal systems and can be enriched with third-party data. CDPs are mainly used by marketers to nurture an existing consumer base.

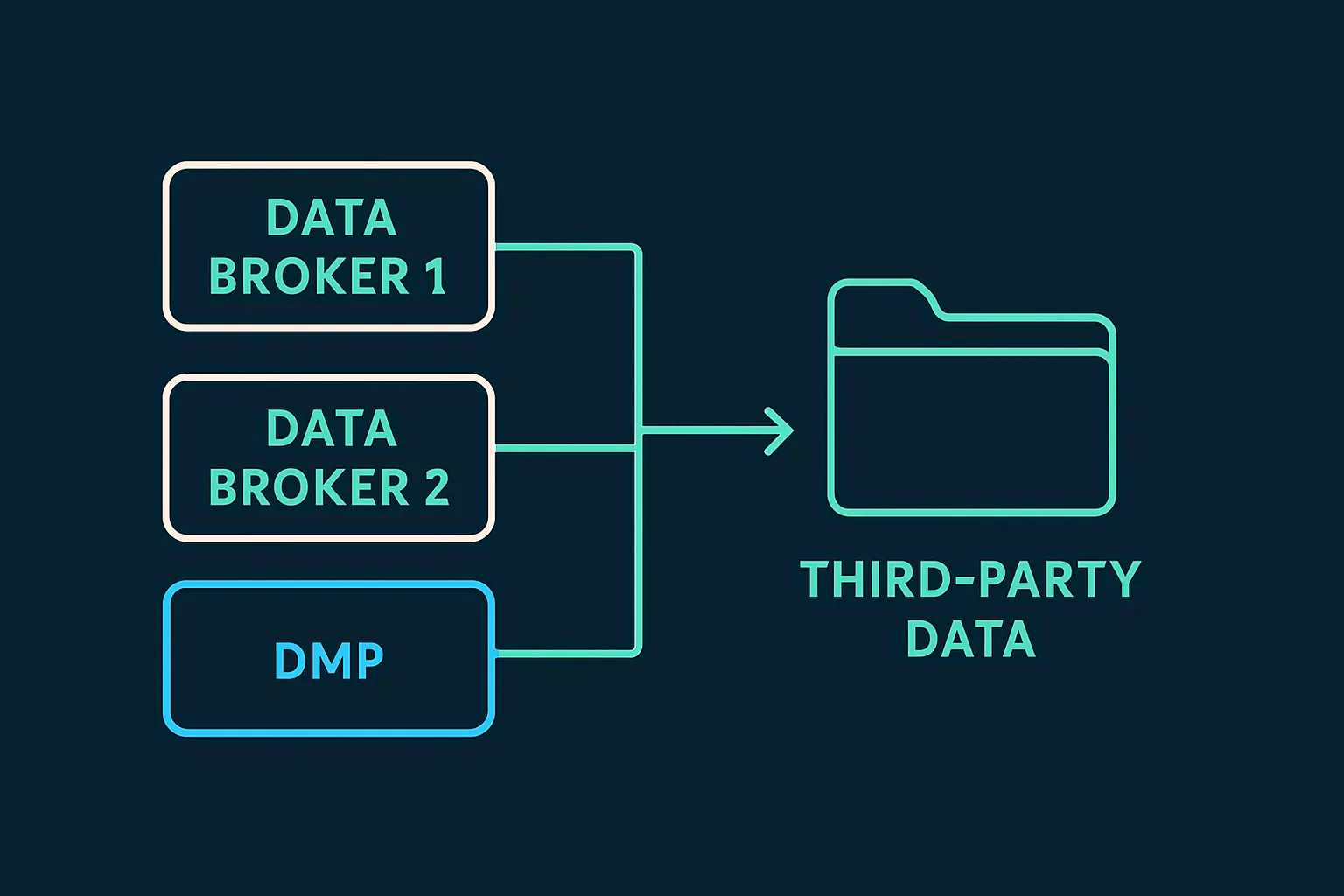

DMPs, by contrast, are primarily responsible for aggregating third-party data, which typically involves the use of cookies. In this sense, a DMP is more of an AdTech platform, while a CDP functions as a MarTech tool. DMPs are mainly used to enhance advertising campaigns and acquire lookalike audiences.

A data lake sits beneath both: it is the system that collects different types of data from multiple sources and feeds that data into a CDP or DMP.

| CDPs | DMPs | Data Lake |
|---|---|---|
| Focused on marketing (communicating to a known audience). | Focused on advertising (communicating to unknown audiences). | A centralized repository used for storing large amounts of structured and unstructured data, which are often pushed to a CDP or DMP and used for creating user profiles and audiences. |
| A CDP typically leverages first-party data, but can be enriched with third-party data. | A DMP typically leverages third-party data, with first-party data acting as an additional source of information. | The data in a data lake can consist of first-party, second-party, and third-party data. |
| CDPs primarily use PII and first-party data. | DMPs have traditionally used non-PII data, such as cookie IDs and device IDs. | |

Popular Use Cases of a CDP, DMP, and Data Lake

| CDP Use Cases | DMP Use Cases | Data Lake Use Cases |
|---|---|---|
| • Audience creation and segmentation. | • Audience creation and segmentation. | • Data collection: Structured and unstructured data collection from multiple sources. |
| • Creating a single customer view (SCV). | • Audience targeting. | • Data integrations: Makes it easier to integrate new data sources. |
| • ID management (e.g. ID resolution and ID graphs). | • Retargeting. | • Analysis: Real-time analysis and reports. |
| • Predictive analytics. | • Lookalike modelling. | • Data operations: Polling and processing. |
| • Content and product recommendations. | • ID management (e.g. ID resolution and ID graphs). | • Security: Access restricted to authorized persons. |
| | • Audience intelligence. | • Analytics: Allows analysis to be run without the need for data transfers. |
| | • Audience extension. | • Cataloging and indexing: Makes content easy to understand through cataloging and indexing. |

What Types of Data Do CDPs, DMPs, and Data Lakes Collect?

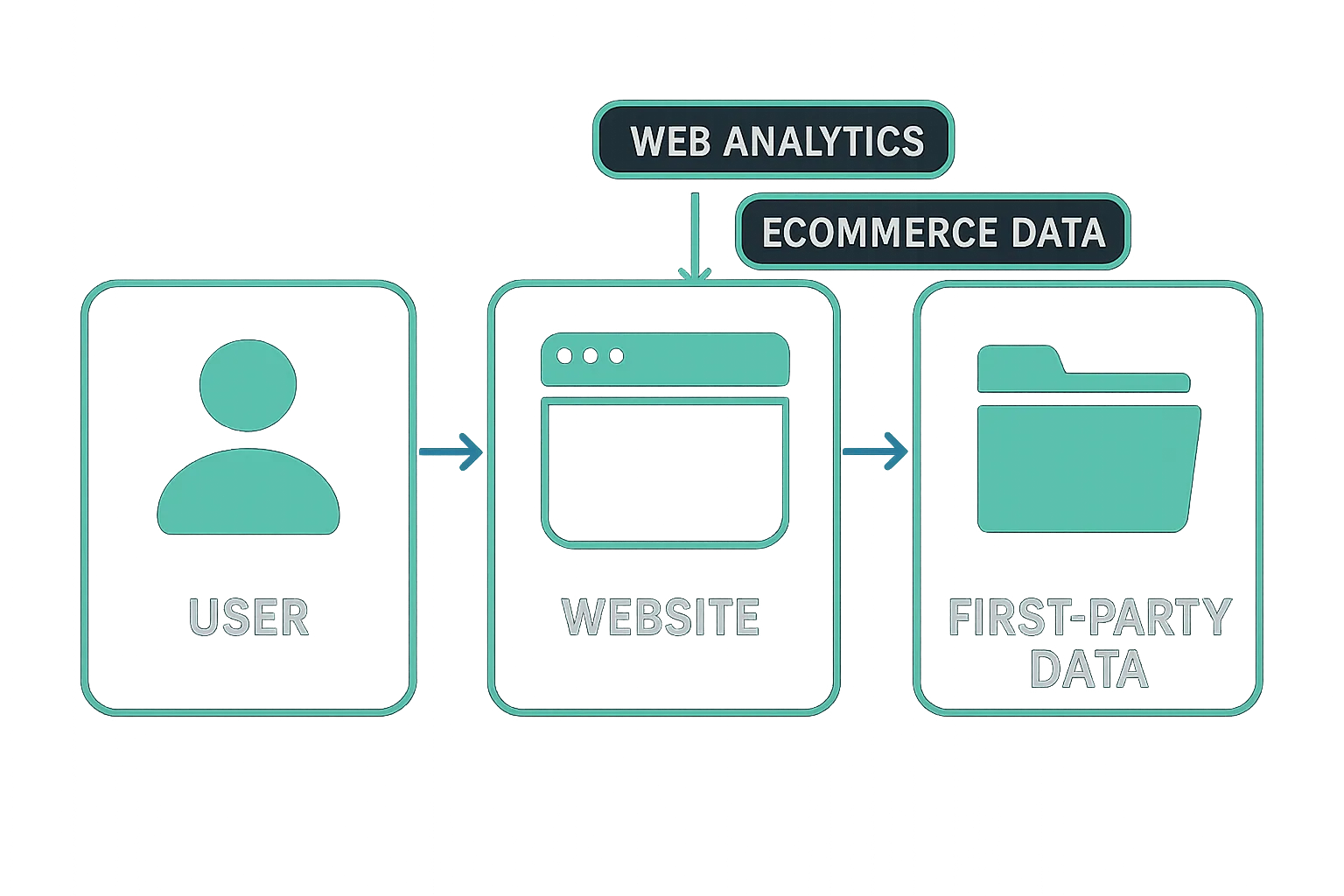

First-Party Data

First-party data is information gathered directly from a user or customer and is generally considered the most valuable form of data, because the advertiser or publisher has a direct relationship with the user — the user has already engaged and interacted with the advertiser.

First-party data is typically collected from:

- Web and mobile analytics tools.

- Customer relationship management (CRM) systems.

- Transactional systems.

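Second-Party Data

Second-party data is another organization's first-party data, obtained directly from that organization, typically through a partnership or data-sharing agreement.

Third-Party Data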

Many publishers and merchants monetize their data by adding third-party trackers to their websites or tracking SDKs to their apps, and passing data about their audiences to data brokers and DMPs.

This data can include a user's browsing history, content interaction, purchases, profile information entered by the user (such as gender or age), GPS geolocation, and much more.

From these data sets, data brokers can create inferred data points about interests, purchase preferences, income groups, demographics, and more.

The data can be further enriched from offline data providers — such as credit card companies, credit scoring agencies, and telcos.

How Do CDPs, DMPs, and Data Lakes Collect This Data?

The most common data collection methods for CDPs, DMPs, and data lakes are:

- Integrating with other AdTech and MarTech platforms via a server-to-server connection or API.

- Adding a tag (i.e., a JavaScript snippet or HTML pixel) to an advertiser's or publisher's website.

- Importing data from files such as CSV, TSV, and Parquet.
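The file-import method in the list above can be sketched with Python's standard `csv` module. This is a minimal illustration, not a production importer: the column names (`user_id`, `event`, `timestamp`) and the `load_events` helper are assumptions made for the example, and a real export will define its own schema.

```python
import csv
import io
from datetime import datetime, timezone

# Hypothetical column names; a real export will define its own schema.
REQUIRED_COLUMNS = {"user_id", "event", "timestamp"}


def load_events(text, delimiter=","):
    """Parse delimited text into event dicts, skipping rows with no user ID."""
    reader = csv.DictReader(io.StringIO(text), delimiter=delimiter)
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    events = []
    for row in reader:
        user_id = row["user_id"].strip()
        if not user_id:
            continue  # the event cannot be attached to a profile
        events.append({
            "user_id": user_id,
            "event": row["event"].strip().lower(),
            # Timestamps are assumed to be ISO 8601 with a UTC offset.
            "timestamp": datetime.fromisoformat(row["timestamp"]).astimezone(timezone.utc),
        })
    return events


sample = (
    "user_id,event,timestamp\n"
    "u1,Page_View,2024-01-05T10:00:00+00:00\n"
    ",click,2024-01-05T10:01:00+00:00\n"
)
events = load_events(sample)
```

Passing `delimiter="\t"` handles TSV files with the same function. Parquet is a binary columnar format and would need a dedicated reader rather than `csv`.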

Technical Requirements and Challenges When Building a CDP or DMP

Both CDP and DMP infrastructures are designed to process large volumes of data — the more data available for building segments, the more valuable the platform becomes for its users (advertisers, data scientists, publishers, and others).

However, the larger the scale of data collection, the more complex the infrastructure setup becomes. Properly assessing the scale and volume of data to be processed is a necessary first step, since the infrastructure design depends heavily on these requirements.

Below are key requirements to consider when building a CDP or DMP.

Data-Source Stream

A data-source stream is responsible for obtaining data from users and visitors. This data must be collected and sent to a tracking server.

Data sources include:

- Website data: JavaScript code on a website monitors browser events. When a visitor takes an action, the JS code creates a payload and sends it to the tracker component.

- Mobile application data: This typically uses an SDK capable of collecting first-party application data, including user identification data, profile attributes, and behavioural data. User behaviour events correspond to specific actions inside mobile apps. Data sent from an SDK is collected by the tracker component.
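To make the tracker component more concrete, here is a minimal sketch of what it might do with an incoming payload from a website tag or mobile SDK. The payload fields (`event`, `source`, `visitor_id`, `properties`) and the `handle_tracking_request` function are hypothetical; the article does not prescribe a schema.

```python
import json
import uuid
from datetime import datetime, timezone

# Hypothetical payload fields; real trackers define their own schema.
REQUIRED_FIELDS = ("event", "source")


def handle_tracking_request(body, now=None):
    """Validate a raw JSON payload and return a normalized event record."""
    payload = json.loads(body)
    for field in REQUIRED_FIELDS:
        if field not in payload:
            raise ValueError(f"missing field: {field}")
    received = now or datetime.now(timezone.utc)
    return {
        "event_id": str(uuid.uuid4()),             # server-side ID, useful for deduplication later
        "event": payload["event"],
        "source": payload["source"],               # e.g. "web" or "mobile_sdk"
        "visitor_id": payload.get("visitor_id"),   # may be absent for new visitors
        "properties": payload.get("properties", {}),
        "received_at": received.isoformat(),       # server clock, not the client clock
    }


web_body = json.dumps({
    "event": "add_to_cart",
    "source": "web",
    "visitor_id": "c-123",
    "properties": {"sku": "A1"},
})
record = handle_tracking_request(web_body)
```

Stamping the record with a server-side ID and timestamp is a design choice in this sketch: client clocks and client-generated IDs are harder to trust when events are later deduplicated and ordered.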

Data Integration

Multiple data sources can be incorporated into a CDP or DMP infrastructure:

- First-party data integration: Includes data collected by a tracker and data from other internal platforms.

- Second-party data integration: Data collected via integrations with data vendors (e.g., credit reporting companies), which can be used to enrich profile information.

- Third-party data integration: Typically via third-party trackers, such as pixels and scripts on websites and SDKs in mobile apps.

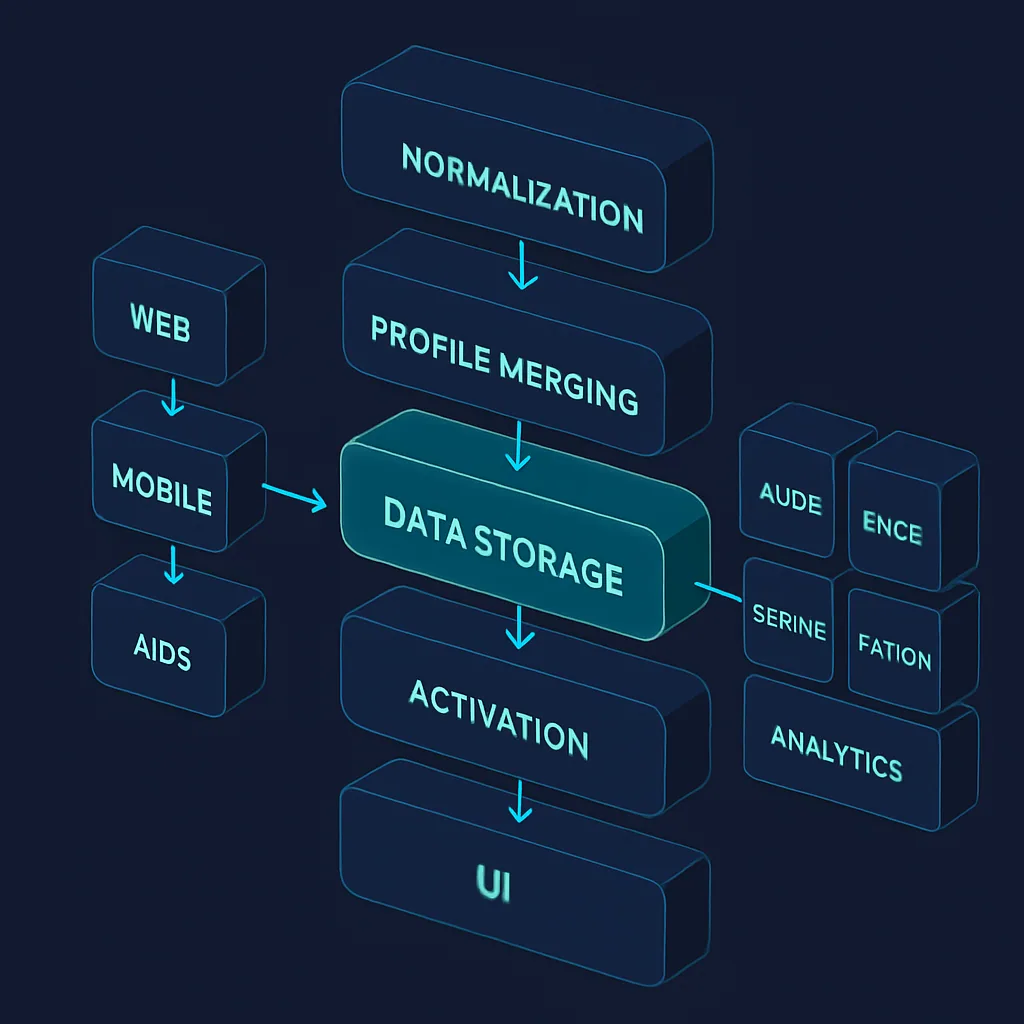

The Number of Profiles

Knowing the number of profiles that will be stored in a CDP or DMP is crucial for determining the appropriate database type for profile storage.

The profile database is responsible for identity resolution, which plays a central role in profile merging and proper segment assignment — making it one of the most important components of any CDP or DMP infrastructure.
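One common way to reason about identity resolution is as a graph problem: identifiers that were observed together are linked, and each connected group becomes one merged profile. The sketch below uses a union-find structure held in memory. The `IdentityGraph` class and the identifier formats are assumptions for illustration; a real profile database would persist these links and apply rules about which links to trust.

```python
from collections import defaultdict


class IdentityGraph:
    """Union-find over identifiers such as cookie IDs, device IDs, and hashed emails."""

    def __init__(self):
        self.parent = {}

    def find(self, identifier):
        """Return the representative identifier of the profile this ID belongs to."""
        self.parent.setdefault(identifier, identifier)
        root = identifier
        while self.parent[root] != root:
            root = self.parent[root]
        # Path compression: point every visited node directly at the root.
        while self.parent[identifier] != root:
            next_id = self.parent[identifier]
            self.parent[identifier] = root
            identifier = next_id
        return root

    def link(self, a, b):
        """Record that two identifiers were observed together."""
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a

    def profiles(self):
        """Group all known identifiers into merged profiles."""
        groups = defaultdict(set)
        for identifier in list(self.parent):
            groups[self.find(identifier)].add(identifier)
        return list(groups.values())


graph = IdentityGraph()
graph.link("cookie:abc", "email_hash:9f2")       # login on the website
graph.link("device:ios-1", "email_hash:9f2")     # login in the mobile app
graph.link("cookie:xyz", "device:android-7")     # unrelated visitor
merged = graph.profiles()
```

Here the first two links collapse three identifiers into one profile, while the third link forms a separate profile. At large profile counts the same idea is usually pushed down into the storage layer, which is why the expected number of profiles influences the choice of database.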

Data Extraction and Discovery

A common use case for both CDPs and DMPs is providing data scientists with a reliable interface to a common source of normalized data.

A cleaned and deduplicated data source is a highly valuable input that can be used to further prepare data for machine-learning purposes. This preparation often requires creating a data lake where data is transformed and encoded into a form that machines can interpret.

Common data transformation types, in particular encoders for categorical values, include:

- OneHotEncoder

- Hashing

- LeaveOneOut

- Target

- Ordinal (Integer)

- Binary

Selecting the right data transformation type and designing a sound data pipeline for machine learning typically requires collaboration between the development team and data scientists who analyze the data and define the machine-learning requirements.
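The transformation names listed above appear to refer to categorical encoders. As a rough illustration of what three of them do, here is a dependency-free sketch of ordinal, one-hot, and hashing encoding. The function names are hypothetical, and the hashing variant is simplified to return a single bucket index per value; library implementations typically differ in output shape and options.

```python
import hashlib


def ordinal_encode(values):
    """Map each category to an integer, in order of first appearance."""
    mapping = {}
    for value in values:
        mapping.setdefault(value, len(mapping))
    return [mapping[v] for v in values], mapping


def one_hot_encode(values):
    """Produce one 0/1 column per distinct category (sorted for stable column order)."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values], categories


def hash_encode(values, n_buckets=8):
    """Hashing trick: map each category to one of n_buckets; collisions are possible."""
    def bucket(value):
        # A cryptographic hash is used only because it is stable across runs.
        digest = hashlib.sha256(value.encode("utf-8")).hexdigest()
        return int(digest, 16) % n_buckets
    return [bucket(v) for v in values]


devices = ["mobile", "desktop", "mobile", "tablet"]
ordinal, mapping = ordinal_encode(devices)   # [0, 1, 0, 2]
one_hot, columns = one_hot_encode(devices)   # columns: ['desktop', 'mobile', 'tablet']
buckets = hash_encode(devices)
```

One-hot encoding grows a column per category, which becomes expensive for high-cardinality fields such as URLs or product IDs; hashing keeps the width fixed at the cost of collisions. Target and leave-one-out encoding need the label column as well, so they are not shown here.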

Machine learning can also be applied to build event-prediction models, run clustering and classification jobs, and aggregate and transform data. These techniques can reveal patterns that are not immediately visible to a human analyst but become clear once the data is transformed (for example, mapped into a space where groups can be separated by a hyperplane).

Segments

The types of segments a CDP or DMP needs to support will also influence the infrastructure design.

Segment types generally include:

- Attribute-based segments: Demographic data, location, device type, etc.

- Behavioural segments: Based on events (e.g., clicking a link in an email) and the frequency of those actions (e.g., visiting a web page at least three times a month).

- Machine learning–based segments:

  - Lookalike / affinity: The goal of lookalike/affinity modelling is to support audience extension. The approach can be based on a variety of inputs and driven by similar objective functions. In practice, this creates a self-improving loop: profiles with high conversion rates are identified, affinity audiences are built from them, those audiences generate more conversions, and the cycle continues.

  - Predictive: The goal of predictive targeting is to use available information to estimate the probability of an event of interest, such as a purchase or app installation, and target only the profiles with a high predicted likelihood.
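The segment types above can be expressed as simple membership checks over a profile. The sketch below is illustrative only: the profile structure, the field names, and the `purchase_probability` score (assumed to come from some upstream model) are all hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical profile records; field names are illustrative only.
NOW = datetime(2024, 3, 31)
profiles = [
    {
        "id": "p1",
        "attributes": {"country": "PL", "device": "mobile"},
        "events": [
            {"name": "page_view", "at": datetime(2024, 3, 5)},
            {"name": "page_view", "at": datetime(2024, 3, 12)},
            {"name": "page_view", "at": datetime(2024, 3, 20)},
        ],
        "purchase_probability": 0.82,  # assumed output of an upstream model
    },
    {
        "id": "p2",
        "attributes": {"country": "PL", "device": "desktop"},
        "events": [{"name": "page_view", "at": datetime(2024, 1, 5)}],
        "purchase_probability": 0.15,
    },
]


def in_attribute_segment(profile, **required):
    """Attribute-based: every required attribute must match exactly."""
    return all(profile["attributes"].get(k) == v for k, v in required.items())


def in_behavioural_segment(profile, event, min_count, window_days, now):
    """Behavioural: the event occurred at least min_count times within the window."""
    cutoff = now - timedelta(days=window_days)
    count = sum(1 for e in profile["events"] if e["name"] == event and e["at"] >= cutoff)
    return count >= min_count


def in_predictive_segment(profile, threshold=0.7):
    """Predictive: keep only profiles whose model score clears the threshold."""
    return profile.get("purchase_probability", 0.0) >= threshold


attribute_segment = [p["id"] for p in profiles if in_attribute_segment(p, country="PL")]
behavioural_segment = [
    p["id"] for p in profiles if in_behavioural_segment(p, "page_view", 3, 30, NOW)
]
predictive_segment = [p["id"] for p in profiles if in_predictive_segment(p)]
```

Attribute checks only need the current profile state, whereas behavioural checks need event history inside a time window. That difference is one reason the supported segment types affect storage and infrastructure design.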

Technical Requirements and Challenges When Building a Data Lake

Building a data lake comes with its own set of challenges:

- Combining multiple data sources to generate useful insights is difficult. IDs are typically required to bind different data sources together, but those IDs are often missing or simply don't match across sources.

- It's often unclear what data is actually present in a given data source. Sometimes even the data owner isn't certain what is contained within it.

- Data cleanup and reprocessing must be possible when an ETL pipeline fails, which will happen at some point. Recovery can be handled manually or automatically. Databricks Delta Lake offers an automatic approach because its Delta tables support ACID transactions. AWS is also implementing ACID transactions in one of its solutions (governed tables), though at the time of writing this is only available in one region.
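The first challenge in the list above, binding sources together when IDs are missing or don't match, is easier to manage once it is measured. The sketch below assumes two hypothetical sources that both carry an email address, normalizes and hashes it to form a join key, and reports how many records match. The source names, the choice of email as the key, and the `match_report` helper are assumptions for illustration.

```python
import hashlib


def email_key(email):
    """Normalize an email and hash it so the join key is not raw PII."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def match_report(source_a, source_b):
    """Count how many join keys overlap and how many records lack a usable ID."""
    keys_a = {email_key(r["email"]) for r in source_a if r.get("email")}
    keys_b = {email_key(r["email"]) for r in source_b if r.get("email")}
    return {
        "matched": len(keys_a & keys_b),
        "only_a": len(keys_a - keys_b),
        "only_b": len(keys_b - keys_a),
        "missing_id_a": sum(1 for r in source_a if not r.get("email")),
        "missing_id_b": sum(1 for r in source_b if not r.get("email")),
    }


# Hypothetical CRM and order-system extracts.
crm = [{"email": "Ann@Example.com "}, {"email": "bob@example.com"}, {"email": None}]
orders = [{"email": "ann@example.com"}, {"email": "carol@example.com"}]
report = match_report(crm, orders)
```

Without the normalization step, `"Ann@Example.com "` and `"ann@example.com"` would count as two different people, which is a typical cause of IDs that "don't match across sources".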

In the first step of processing, data is extracted and loaded into the raw stage. After this initial stage, multiple subsequent data lake stages are typically available depending on the use case.

The second step usually carries out data transformations such as deduplication, normalization, column prioritization, and merging. Further steps perform additional transformation layers — for example, business-level aggregations needed by the data science team or for reporting purposes.
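The staged processing described above can be sketched as three small functions, one per stage. The article names only the raw stage as "bronze"; the "silver" and "gold" labels in the comments follow a common naming convention and are an assumption here, as are the record fields and function names.

```python
from collections import defaultdict


def to_raw(records):
    """Stage 1 (bronze): keep an unaltered copy of what was received."""
    return [dict(r) for r in records]


def to_clean(raw):
    """Stage 2 (silver): deduplicate by event ID and normalize field values."""
    seen = set()
    clean = []
    for r in raw:
        event_id = r.get("event_id")
        if event_id is None or event_id in seen:
            continue
        seen.add(event_id)
        clean.append({
            "event_id": event_id,
            "user_id": str(r["user_id"]).strip().lower(),
            "event": r["event"].strip().lower(),
            "value": float(r.get("value") or 0),
        })
    return clean


def to_aggregates(clean):
    """Stage 3 (gold): business-level aggregates, computed once per pipeline run."""
    totals = defaultdict(lambda: {"events": 0, "revenue": 0.0})
    for r in clean:
        totals[r["user_id"]]["events"] += 1
        if r["event"] == "purchase":
            totals[r["user_id"]]["revenue"] += r["value"]
    return dict(totals)


incoming = [
    {"event_id": "e1", "user_id": " U1 ", "event": "Purchase", "value": "19.99"},
    {"event_id": "e1", "user_id": " U1 ", "event": "Purchase", "value": "19.99"},  # duplicate
    {"event_id": "e2", "user_id": "u1", "event": "page_view"},
    {"event_id": "e3", "user_id": "u2", "event": "purchase", "value": 5},
]
bronze = to_raw(incoming)
silver = to_clean(bronze)
gold = to_aggregates(silver)
```

Because the bronze stage is never modified, the later stages can be rerun from it after a failed pipeline run or a change in transformation logic.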

By combining AWS components such as AWS Lake Formation (which builds on Amazon S3 storage) with AWS Glue or Amazon EMR for ETL pipeline processing, it's possible to create a centralized, curated, and secured data repository.

On top of AWS Lake Formation, Amazon Athena provides a common SQL query interface that can be used across multiple infrastructure components and offers a unified data access method to the lake.

AWS Identity and Access Management (IAM) policies add a further layer of access control to the data lake. When a data lake is properly designed, data access can also be optimized for cost: because the final stage holds pre-aggregated data, the required operations run once during the ETL pipeline rather than repeatedly at query time.

Example Architecture: Building a CDP, DMP, and Data Lake

A complete implementation walkthrough covers the following areas:

- A list of the main features of a CDP/DMP and data lake.

- An example of the architecture setup on AWS.

- The request flows.

- The Amazon Web Services components involved.

- A cost-level analysis of the different components.

- Important implementation considerations.

The general architectural pattern involves a data collection layer feeding raw data into a lake, followed by staged transformation pipelines that normalize and enrich the data, with the processed output then made available to a CDP or DMP layer for audience creation, segmentation, and analytics. Choosing the right storage format, transformation tooling, and access controls at each stage will have a significant impact on both performance and operating costs as data volumes grow.